FG-75

Cumulus

Project Details

- Project Lead: John Bresnahan
- Project Manager: John Bresnahan
- Institution: Nimbus, Argonne National Lab
- Discipline: Computer Science (401)

Abstract

The advent of cloud computing introduced a convenient model for outsourcing storage. At the same time, the scientific community already operates large storage facilities and software. How can a community that has already accumulated vast amounts of data and storage infrastructure take advantage of these cloud storage innovations? How would such a solution compare with existing models in terms of performance and fairness of access?

Intellectual Merit

Provide later

Broader Impacts

Provide later

Scale of Use

Provide later

Results

Problem: The advent of cloud computing introduced a convenient model for outsourcing storage. At the same time, the scientific community already operates large storage facilities and software. How can a community that has already accumulated vast amounts of data and storage infrastructure take advantage of these cloud storage innovations? How would such a solution compare with existing models in terms of performance and fairness of access?

Project: John Bresnahan at the University of Chicago developed Cumulus, an open-source storage cloud, and performed a qualitative and quantitative evaluation of the model in the context of existing storage solutions and the needs for performance and scalability. The investigation defined the pluggable interfaces needed and science-specific features (e.g., quota management), and measured upload and download performance as well as how the system scales with the number of clients and storage servers. Improvements resulting from the investigation were integrated into Nimbus releases.

This work, in particular the performance evaluation, was performed on 16 nodes of the FutureGrid Hotel resource. Dedicated nodes alone were not enough: a dedicated network was also needed, because network disturbances could distort the measurements of upload/download efficiency as well as of scalability. Further, the scalability experiments required a well-maintained and well-administered parallel file system, which the GPFS partition on FutureGrid's Hotel resource provided. Such conditions are typically hard to find outside of dedicated computing resources within an institution.

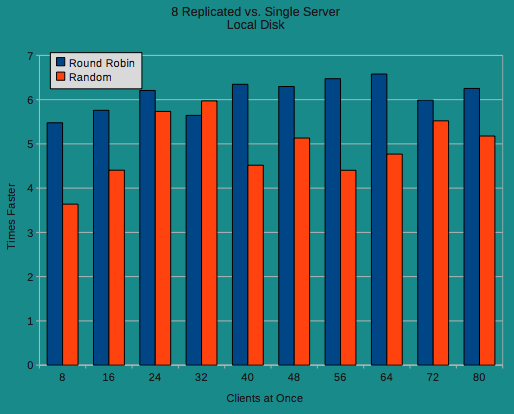

Figure: Cumulus scalability over 8 replicated servers using random and round-robin algorithms
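The figure compares two policies for spreading client requests across replicated servers. The sketch below contrasts them under simplifying assumptions: every request is assigned to exactly one replica, the replica list is fixed, and the names `SERVERS`, `round_robin`, `random_choice`, and `assign` are hypothetical illustrations, not part of the Cumulus code base.

```python
import itertools
import random
from collections import Counter
from typing import Callable, Iterator, List

# Hypothetical names for 8 replicated servers (illustration only, not Cumulus config).
SERVERS: List[str] = [f"server-{i}" for i in range(8)]


def round_robin(servers: List[str]) -> Callable[[], str]:
    """Return a selector that cycles through the replicas in a fixed order."""
    cycle: Iterator[str] = itertools.cycle(servers)
    return lambda: next(cycle)


def random_choice(servers: List[str], seed: int = 0) -> Callable[[], str]:
    """Return a selector that picks a replica uniformly at random (seeded for repeatability)."""
    rng = random.Random(seed)
    return lambda: rng.choice(servers)


def assign(selector: Callable[[], str], n_requests: int) -> Counter:
    """Count how many of n_requests the selector sends to each replica."""
    return Counter(selector() for _ in range(n_requests))


if __name__ == "__main__":
    rr = assign(round_robin(SERVERS), 64)
    rnd = assign(random_choice(SERVERS), 64)
    print("round robin per-server load:", sorted(rr.values()))
    print("random per-server load:     ", sorted(rnd.values()))
```

With 64 requests, round robin gives each of the 8 replicas exactly 8 requests, while random selection can leave some replicas busier than others over a short run. Random selection, on the other hand, needs no shared counter, so independent front ends can apply it without coordinating. This sketch does not reproduce the measured results in the figure.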

References:

- John Bresnahan, Kate Keahey, Tim Freeman, David LaBissoniere. "Cumulus: Open Source Storage Cloud for Science." SC10 Poster, New Orleans, LA, November 2010. http://www.nimbusproject.org/files/cumulus_poster_sc10.pdf
