FutureGrid Cloud Metric

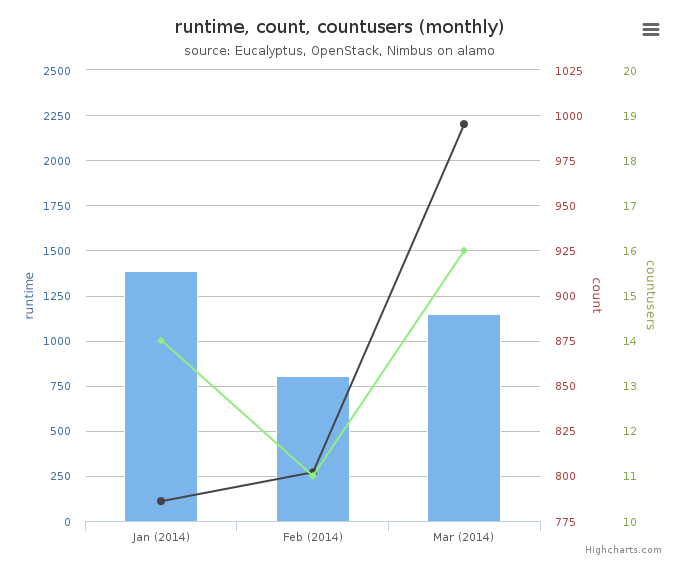

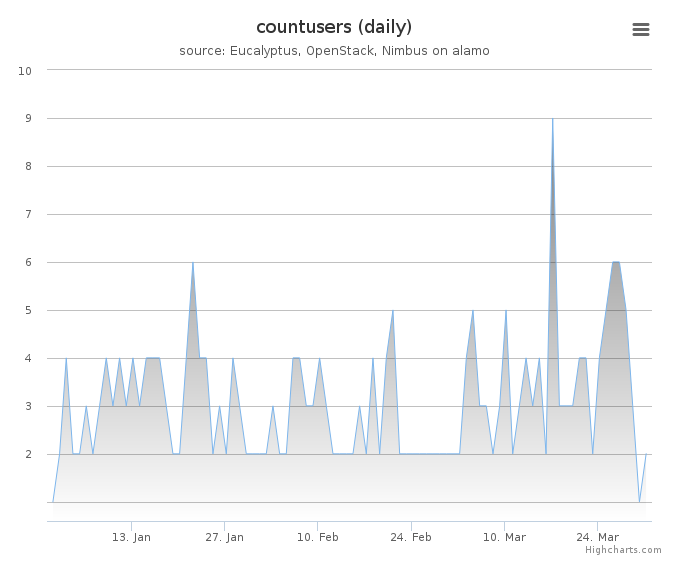

Period: January 01 – March 31, 2014

Cloud (IaaS): Nimbus, OpenStack

Hostname: alamo

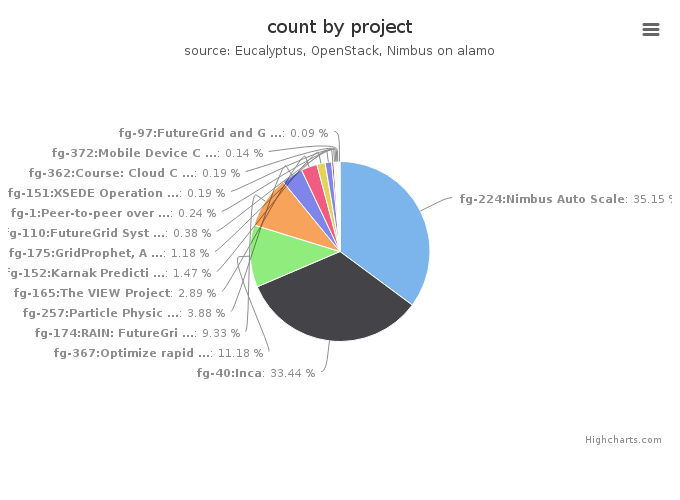

| Project | Value |
|---|---|
| fg-224:Nimbus Auto Scale | 742 |
| fg-40:Inca | 706 |
| fg-367:Optimize rapid deployment and updating of VM images at the remote compute cluster | 236 |
| fg-174:RAIN: FutureGrid Dynamic provisioning Framework | 197 |
| fg-257:Particle Physics Data analysis cluster for ATLAS LHC experiment | 82 |
| fg-165:The VIEW Project | 61 |
| fg-152:Karnak Prediction Service | 31 |
| fg-175:GridProphet, A workflow execution time prediction system for the Grid | 25 |
| fg-110:FutureGrid Systems Development | 8 |
| fg-1:Peer-to-peer overlay networks and applications in virtual networks and virtual clusters | 5 |
| fg-151:XSEDE Operations Group | 4 |
| fg-362:Course: Cloud Computing and Storage (UF) | 4 |
| fg-372:Mobile Device Computation Offloading over SocialVPNs | 3 |
| fg-392:Using Clouds to Scale GIS Applications | 2 |
| fg-382:Reliability Analysis using Hadoop and MapReduce | 2 |
| fg-97:FutureGrid and Grid'5000 Collaboration | 2 |
| fg-42:SAGA | 1 |

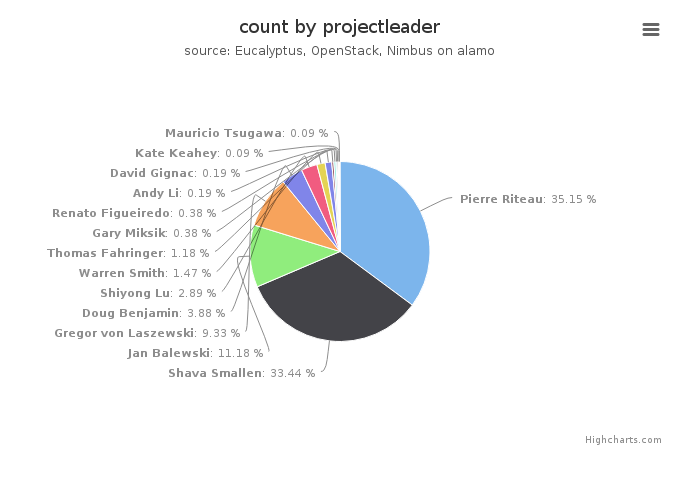

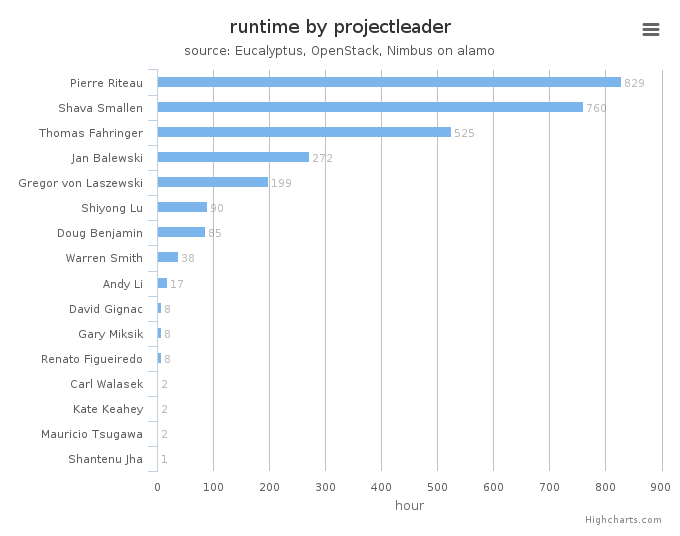

| Project leader | Value |
|---|---|
| Pierre Riteau | 742 |
| Shava Smallen | 706 |
| Jan Balewski | 236 |
| Gregor von Laszewski | 197 |
| Doug Benjamin | 82 |
| Shiyong Lu | 61 |
| Warren Smith | 31 |
| Thomas Fahringer | 25 |
| Gary Miksik | 8 |
| Renato Figueiredo | 8 |
| Andy Li | 4 |
| David Gignac | 4 |
| Carl Walasek | 2 |
| Kate Keahey | 2 |
| Mauricio Tsugawa | 2 |
| Shantenu Jha | 1 |

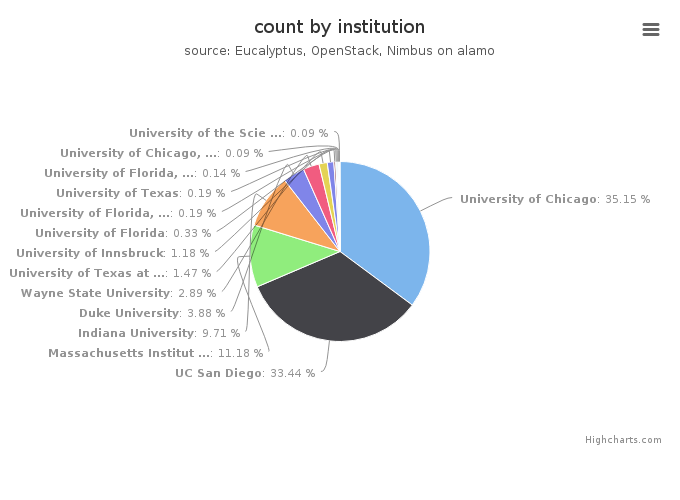

| Institution | Value |
|---|---|
| University of Chicago | 742 |
| UC San Diego | 706 |
| Massachusetts Institute of Technology, Laboratory for Nuclear Sc | 236 |
| Indiana University | 205 |
| Duke University | 82 |
| Wayne State University | 61 |
| University of Texas at Austin | 31 |
| University of Innsbruck | 25 |
| University of Florida | 7 |
| University of Florida, Department of Electrical and Computer Eng | 4 |
| University of Texas | 4 |
| University of Florida, Electrical and Computer Engineering | 3 |
| University of Chicago, Computation Institute | 2 |
| University of the Sciences, Mathematics, Physics, and Statistic | 2 |
| Louisiana State University | 1 |

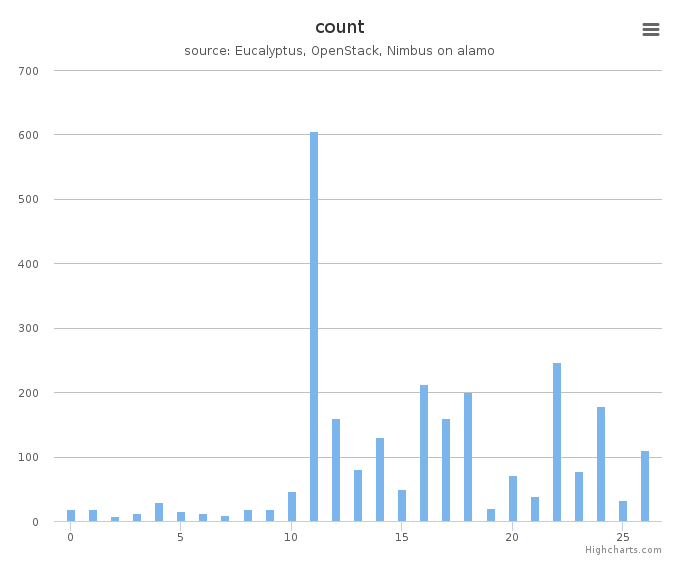

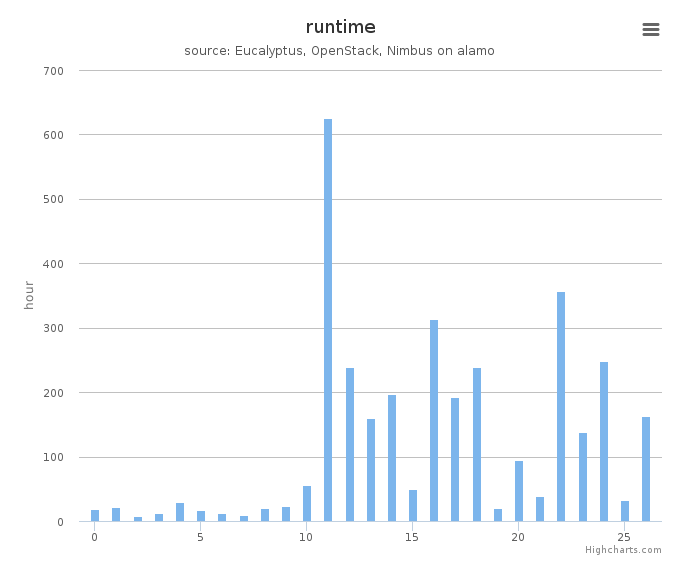

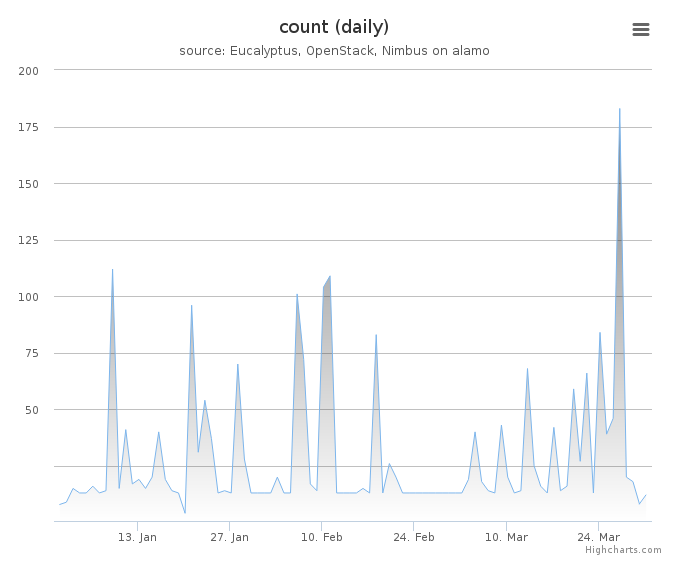

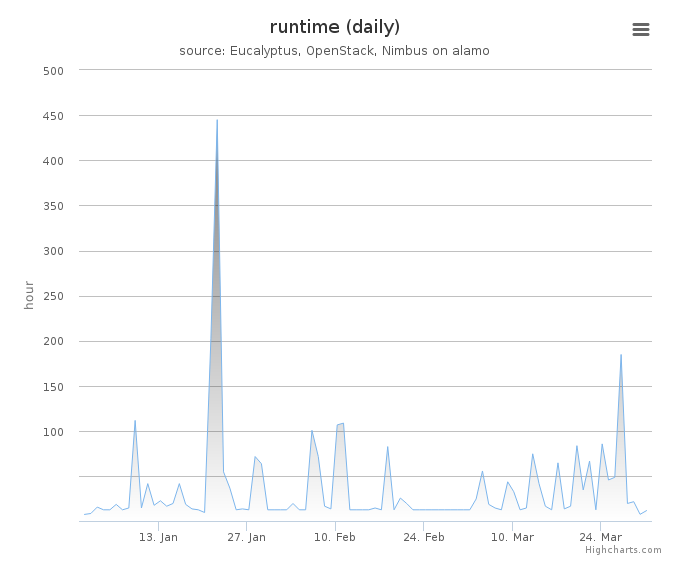

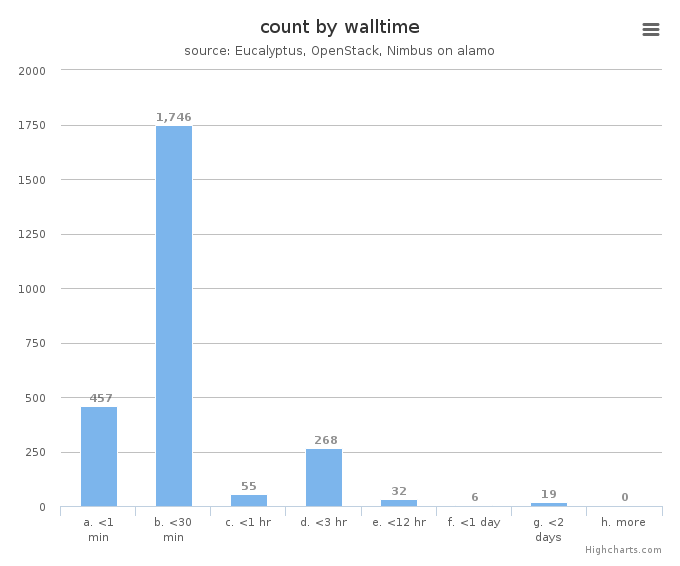

The system information charts show how utilization is distributed by VM count and by wall time. Each cluster in a chart represents one compute node.